April 27, 2021

A few years ago I was working at a pharmaceutical manufacturing plant as a process improvement manager, and my role was to streamline material flow and reduce inventories. We were running a pilot using the rhythm wheel concept to optimize the production sequence and load leveling of one of the tablet blistering and packaging lines.

The next focus was to streamline the flow of products in the tablet and capsule manufacturing department.

The Analysis

A walk-through of the production area showed that there were some major issues. Batches of work in progress (WIP) were stored in drums all along the corridors of the production department. It was nearly impossible to get an overview of what was where.

So I took a whiteboard and drew all the manufacturing workcenters (granulators, mixers, tablet presses, capsule fillers, tablet coaters). Next, I placed a post-it for each batch that was in a queue, waiting to be processed. Looking at the array of post-its, the full picture emerged: there were more than one hundred batches in process at any time.

After a few days I replaced the post-it notes with customized cards, using different colors for different products, and wrote the work-order number on each card. Every morning I would update the board by moving the cards as the batches progressed through production, and then take a picture of the situation.

After a couple of weeks I could use the photo sequence to create movies of the batches flowing through production. There was very little flow. Every day only a few batches, typically fewer than 10, shifted position. Some batches would not move for more than two weeks, always waiting in front of a workcenter to be processed.

What was going on?

Scheduling

The planning and scheduling of the manufacturing department were done in Excel spreadsheets by a senior planner with years of experience. Every Friday he would spend the entire day creating the detailed manufacturing schedule for the following week. Based on his knowledge he could allocate and sequence the production orders to the right equipment. The manufacturing environment was very complex.

Some manufacturing steps could theoretically be performed by different workcenters, but because of GMP validation rules there were constraints on which product could be manufactured on which workcenter. When confronted with the data showing that some batches were waiting on the floor for weeks without flowing, the planner responded that he used them as an asset that gave him flexibility. On average it took a batch several weeks, sometimes over a month, to traverse the manufacturing process.

One of the primary reasons was the strategy chosen to schedule the shop floor. Historically the two main granulators were the bottleneck of the manufacturing process. Since granulation is the first step of the manufacturing process, the bottleneck arose at the very start. The philosophy of the company had been this: the granulators must always be busy. Indeed, this was the approach used in scheduling. Step number one was to fill the granulators to capacity. However, as time passed and the product mix changed, the constraint moved from granulation to operations further downstream in the process, namely tableting and coating. Because the granulators kept being loaded to capacity while the downstream steps could not keep up, this created predictable clogging and queueing.

An Unexpected Development

One day I received an invitation from the engineering department to attend a project presentation related to the transformation of the solid manufacturing department. This was a major engineering project aiming to increase worker safety by eliminating all contact between people and products. This type of contact typically happened when loading and unloading machines.

I attended the presentation and very quickly realized that we had a major issue. The solution for removing exposure was that every batch in manufacturing would be moved in a high-tech bin. These bins would accompany the manufacturing batches through the production process.

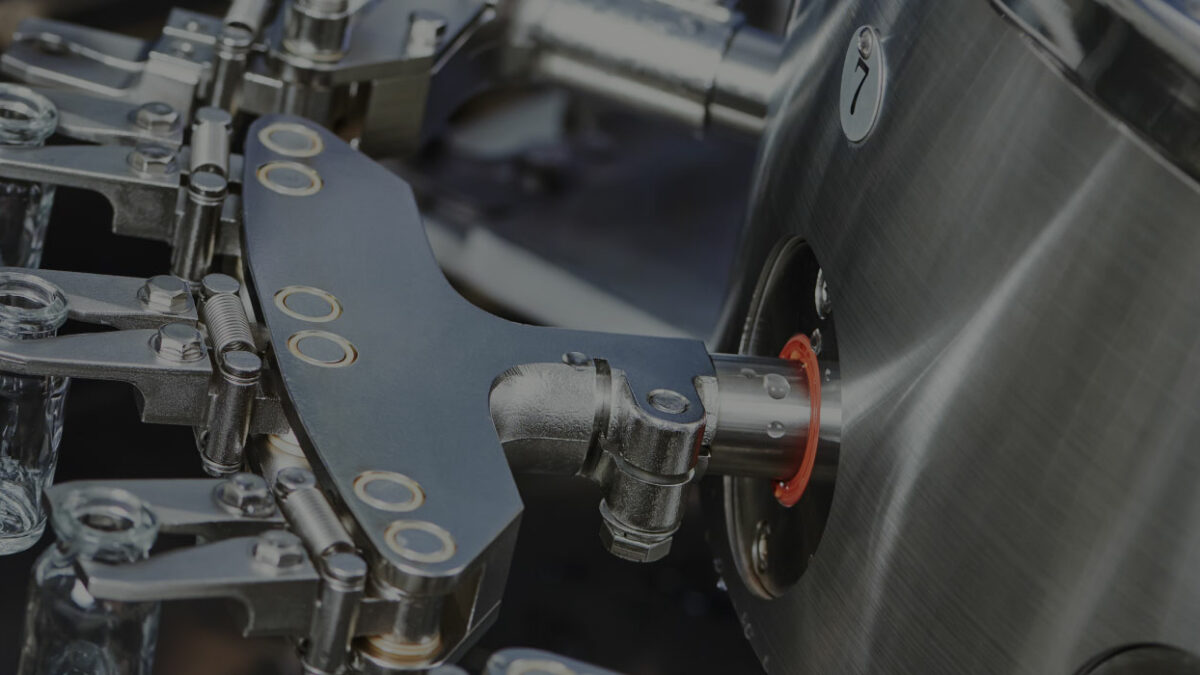

To start, you fill the bin with the raw materials and you connect it to the top of the granulator through a sealed connection (imagine a space capsule docking at the International Space Station). The raw material is then emptied into the granulator. After the granulation operation, the same bin is connected at the bottom of the granulator and the processed material is emptied into it. The same procedure is repeated at every step of the process. In the end, the bin is washed and it’s ready for the next batch.

What nobody had realized was that this procedure would effectively implement a pull process called CONWIP, short for CONstant Work In Progress. Some definitions: in a pull system, you start a manufacturing operation based on a signal coming from the process itself rather than from a predefined schedule. The pull signal indicates that specific conditions are met. CONWIP is the pull method in which the signal is given by the number of batches in progress within the process. In this case you can only have as many batches in progress as you have bins, and you can only start manufacturing a new batch when a bin becomes available again. Since these bins are expensive pieces of technology, the project was budgeted for an initial quantity of about 20 of them. This means that you can have at most about 20 batches in progress.
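To make the mechanism concrete, here is a minimal Python sketch of a CONWIP release gate. It is an illustration only, not the plant's system: the class `ConwipLine` and its method names are hypothetical, and the only rule it encodes is the one described above, that a batch may start only when a bin is free.

```python
from collections import deque


class ConwipLine:
    """Toy CONWIP gate: the number of bins caps the batches in progress.

    Hypothetical illustration; names and structure are assumptions.
    """

    def __init__(self, bins):
        self.free_bins = bins        # bins that are clean and available
        self.waiting = deque()       # batches requested but not yet started
        self.in_progress = set()     # batches currently occupying a bin

    def request_start(self, batch_id):
        """Queue a batch and start it immediately if a bin is free."""
        self.waiting.append(batch_id)
        return self._release()

    def finish(self, batch_id):
        """A batch leaves the process; its bin is washed and freed."""
        self.in_progress.remove(batch_id)
        self.free_bins += 1
        return self._release()

    def _release(self):
        """Start waiting batches, in order, while bins are available."""
        started = []
        while self.free_bins > 0 and self.waiting:
            batch = self.waiting.popleft()
            self.free_bins -= 1
            self.in_progress.add(batch)
            started.append(batch)
        return started


line = ConwipLine(bins=2)            # 2 bins instead of ~20, to keep it short
print(line.request_start("A"))       # a bin is free, so A starts
print(line.request_start("B"))       # second bin is free, so B starts
print(line.request_start("C"))       # no bin left: C has to wait
print(line.finish("A"))              # A's bin is freed, which pulls C in
```

The pull signal here is the bin itself: nothing in the code looks at a schedule, only at whether a bin has come back.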

Remember that, the way we were working at the time, we had one hundred or more batches in progress. It was obvious that this would change the game for scheduling completely, as the number of bins introduced a new constraint. There was no way we could effectively schedule manufacturing using the current approach, not without incurring massive losses in throughput. We needed a solution and we needed it fast.

Uncovering The Solution

I figured there were two possible ways to solve the dilemma: a low-tech, lean solution involving a two-level set of kanban cards to manage the schedule, or a high-tech solution in which we would create a digital replica of the entire manufacturing environment and use multi-constraint optimization algorithms to build the optimized schedule.

I discarded the first approach; it could have worked, but it wasn’t practical. Explaining it conceptually and maintaining it in the long term were both daunting prospects. It also just wasn’t a cultural fit with the company.

Since the philosophy of the organization reflected a strong belief in technology, the second option was a better match. Besides, I was familiar with a genetic optimization software package that would be well suited to the task, and we had the resources in house to build the necessary digital twin model. With that in mind, we created a multidisciplinary team and built the digital twin with a high level of detail and granularity, containing all the rules and constraints that impacted operations.

The solution also impacted the work organization. Before the project, the planning department issued the weekly schedule to production using its best understanding of all constraints. Most people in this environment know that a detailed schedule can be maintained for the first couple of days, but by Wednesday delays and issues have started accumulating and the schedule falls apart: first a piece of equipment breaks down and needs repair, then a material is missing and some production step must be postponed, and so on. During the second half of the week the production managers take things into their own hands, problem-solving and adapting the schedule. This generally works because they are the most knowledgeable about what can and cannot be done in their production department.

The new solution would take advantage of this fact: the new scheduling optimizer would be managed by the production department itself, not by planning. In the new set-up, the planning department would limit itself to communicating which batches were required and by when, and the production department would use that information to create the optimized schedule with the optimizer. If some unexpected event occurred during the week, such as a major equipment breakdown, the production manager would simply feed the equipment downtime into the optimizer and re-run the optimization.

Results

The introduction of the digital twin schedule optimization was a major success.

When asked about it, the manufacturing managers were thrilled that they finally had schedules they could truly follow. The throughput time (or cycle time) went down dramatically, as predicted by Little’s Law, which states that Work In Progress (WIP) equals Throughput multiplied by Cycle Time. This is pretty intuitive: if my Throughput is 5 batches per day and it takes 7 days to manufacture a batch, then I will have a WIP of 35 batches in progress in the production department.
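The arithmetic can be written out in a few lines of Python. This is only a worked illustration of Little’s Law; the function names are hypothetical, and the 5 batches per day is the illustrative figure from the example above, not measured plant data. Applying it to the 100-batch and 20-bin situations assumes, for the sake of the illustration, that throughput stays the same in both cases.

```python
def wip(throughput_per_day, cycle_time_days):
    """Little's Law: WIP = Throughput x Cycle Time."""
    return throughput_per_day * cycle_time_days


def cycle_time(wip_batches, throughput_per_day):
    """Little's Law rearranged: Cycle Time = WIP / Throughput."""
    return wip_batches / throughput_per_day


# The example from the text: 5 batches/day and 7 days per batch.
print(wip(5, 7))             # batches in progress

# Assumption for illustration: throughput stays at 5 batches/day.
print(cycle_time(100, 5))    # days per batch with ~100 batches on the floor
print(cycle_time(20, 5))     # days per batch when 20 bins cap the WIP
```

Under that assumption, cutting the WIP by a factor of five cuts the cycle time by the same factor.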

This applies very well in a CONWIP environment, where you can solve the formula for cycle time: Cycle Time = WIP / Throughput. Since in CONWIP you fix the WIP, in practice you control the Cycle Time. Of course, there is a critical level of WIP below which this no longer works, because you start losing throughput as well: you have so few batches that some critical resources will sit idle for lack of material to work on. This is where the optimization plays a major role, ensuring that even with a level of WIP close to the critical WIP, all workcenters are optimally loaded to achieve the highest throughput, and therefore the fastest cycle time.
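The idea of a critical WIP can be sketched with the standard idealized, zero-variability model of a line: throughput rises with WIP until the bottleneck is saturated, and beyond that point extra WIP only adds waiting time. The numbers below (a bottleneck rate of 5 batches per day and 3 days of pure processing time) are hypothetical, chosen only to show the shape of the relationship; a real plant with variability performs worse than this best case.

```python
def best_case(wip, bottleneck_rate, raw_process_time):
    """Idealized throughput and cycle time for a line holding `wip` batches.

    bottleneck_rate:   batches/day the slowest workcenter can process
    raw_process_time:  days a batch needs if it never waits in a queue
    """
    throughput = min(wip / raw_process_time, bottleneck_rate)
    cycle_time = max(raw_process_time, wip / bottleneck_rate)
    return throughput, cycle_time


BOTTLENECK_RATE = 5.0     # batches/day (hypothetical)
RAW_PROCESS_TIME = 3.0    # days (hypothetical)

# Critical WIP: the smallest WIP that keeps the bottleneck fully busy.
critical_wip = BOTTLENECK_RATE * RAW_PROCESS_TIME

for batches in (5, 10, 15, 20, 100):
    th, ct = best_case(batches, BOTTLENECK_RATE, RAW_PROCESS_TIME)
    print(f"WIP={batches:>3}  throughput={th:.2f}/day  cycle time={ct:.1f} days")
```

Below the critical WIP (15 batches with these numbers) throughput drops, while above it throughput is flat and every extra batch only lengthens the cycle time. Both rows remain consistent with Little’s Law, since throughput multiplied by cycle time always equals the WIP.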

Ready to find out what computer modeling can do for your supply chain? Running simulations is a fundamental component of SCP, but your learning shouldn’t stop there. Booking a free consultation will let you know more about it.